I do not have much use for notions like “objective value” and “objective truth.”

—Richard Rorty

The man who cannot believe his senses, and the man who cannot believe anything else, are both insane, but their insanity is proved not by any error in their argument, but by the manifest mistake of their whole lives.

—G. K. Chesterton

In the foreword to his magnum opus, European Literature and the Latin Middle Ages (1948), the great German scholar Ernst Robert Curtius noted that his book, for all its daunting scholarship, was “not the product of purely scholarly interests.” Rather, it

grew out of a concern for the preservation of Western culture. It seeks to serve an understanding of the Western cultural tradition in so far as it is manifested in literature. It attempts to illuminate the unity of that tradition … by the application of new methods. … In the intellectual chaos of the present it has become necessary … to demonstrate that unity. But the demonstration can only be made from a universal standpoint. Such a standpoint is afforded by Latinity.

It would not be easy to find a passage more at odds, in tone or substance, with the sensibilities that dominate the cultural landscape today. Curtius’s talk of “new methods” might at first elicit some enthusiasm from trendy advocates of literary theory: after all, the word “method” can be counted on to affect them the way a ringing bell affected Pavlov’s dog. In fact, though, they would find Curtius’s patient investigation of literary topoi—for that was what he meant by “new methods”—hopelessly retardataire. And Curtius’s method is the least of what sets him apart from the reigning sensibilities. Much more important is his quiet but determined concern for the “preservation of Western culture,” his effort to exhibit the “unity of that tradition,” his faith in a “universal standpoint” from which the “intellectual chaos” of his time could be effectively addressed, even his turn to Latin, “the language of the educated during the thirteen centuries which lie between Virgil and Dante”: all mark him as an oddity in the present intellectual climate.

Today, the very idea that there might be something distinctive about the Western cultural tradition—something, moreover, eminently worth preserving—is under attack on several fronts. Multiculturalists in the academy and other cultural institutions (museums, foundations, the entertainment industry) busy themselves denouncing the West as racist, sexist, imperialistic, and ethnocentric. The cultural unity that Curtius celebrates is challenged by intellectual segregationists eager to champion relativism and to attack what they see as the “hegemony” of Eurocentrism in art, literature, philosophy, politics, and even in science. The ideal of a “universal standpoint” from which the achievements of the West may be understood and disseminated is derided by partisans of cultural studies and neo-pragmatism as parochial and, worse, dangerously “foundationalist.”

Writing in the immediate aftermath of World War II, Curtius naturally had the assaults of totalitarianism chiefly in mind when he spoke of the “intellectual chaos” of his time. Today’s cultural commissars do not—not yet, anyway—control any governments or police forces. But the intellectual and spiritual chaos they endorse is potentially as disruptive and paralyzing as any brand of nihilism. The political philosopher Hannah Arendt once described totalitarianism as an “experiment against reality.” Among other things, she had in mind the peculiar mixture of gullibility and cynicism that totalitarian movements inspire, an amalgam that fosters an intellectual twilight in which people believe “everything and nothing, think that everything was possible and nothing was true.” It is in this sense that the cultural relativists of today display a totalitarian cast of mind. Their efforts to disestablish the intellectual tradition of the West are so many experiments against reality: the reality of our cultural and spiritual legacy.

It is a simple matter to give examples and describe the effects of such experiments against reality. But uncovering their precise nature—what, finally, motivates them, what they portend—is more difficult. They are as amorphous as they are widespread and virulent. It is easy to get lost in the maze of competing barbarisms: deconstruction, structuralism, postcolonialism, and queer theory for breakfast, postdeconstructive cultural studies and Lacanian feminism for lunch. Who can say what will be served up for dinner? The menu is endless, and endlessly hermetic. And yet there are recurrent themes, arguments, and attitudes. Above all there are unifying suppositions, often only half-articulated, about the stability of human nature, the meaning of tradition, the scope and criteria of knowledge. In an appendix to his Essays on European Literature, Curtius again provides a striking counter-example to today’s received wisdom when he speaks of the “essential connection between love and knowledge” and emphasizes the critic’s receptivity, his subservience to the reality he seeks to understand. “Reception is the essential condition for perception,” Curtius writes, “and this then leads to conception.” At a time when the hubris of the critic is matched only by the fatuousness of his theories, Curtius’s insistence that knowledge owes a perpetual debt to reality—that scholarship, as he puts it, “must always remain objective”—sounds a refreshingly discordant note.

In some ways, Curtius’s reflections on the responsibilities of scholarship are—or would once have been—commonplace. But they touch on a matter of critical importance for anyone concerned with the future of the European past. Behind Curtius’s insistence on the essentially receptive attitude of the critic is an acknowledgment that reality ultimately transcends our efforts to master it. It is reality that speaks to us, not we who lecture it. At the same time, Curtius’s entire commitment to scholarship and “the preservation of Western culture” rests on a faith that the search for truth is not futile. If we must wait upon truth, we do not wait in vain. This twofold attitude—an acceptance of human limitation together with an affirmation of human capability—underlies his scholarly endeavor and links it to the great humanistic tradition of which he is a late, eloquent spokesman. It is also, finally, what sets him at odds with the enemies of that tradition.

There are two large questions at issue in this conflict: the relation between truth and cultural value, and the authority of tradition as the custodian of mankind’s spiritual aspirations.

Of the many things that have characterized the main current of European culture, perhaps none has been more central than faith in the liberating power of truth. At least since Plato described truth as the “food of the soul” and linked it to the idea of the good, truth has had, in the West, a normative as well as an intellectual component. In this sense, knowing the truth has been held to be not only a matter of comprehension but also of enlightenment, its attainment a moral as well as a cognitive achievement. The habit of judging “according to right reason,” in Aristotle’s formula, was seen to be as much an expression of character as of intelligence. Saying yes to the truth involved ascent as much as assent. Philosophy, the “love of wisdom,” proposed freedom not simply from ignorance but also, centrally, from illusion. “You shall know the truth,” the Gospel of St. John tells us, “and the truth shall set you free.” The optimism inherent in this imperative underlies not only the main springs of Western religion but also the development of its science and political institutions from the time of the Greeks through the Enlightenment and beyond.

Of course, this edifying picture has had plenty of competition. “What is truth?” asked Pontius Pilate as he washed his hands, thus providing a role model for countless generations of cynics. At the end of The Republic, Socrates says that the philosopher’s “main concern” should be “to make a reasoned choice between the better and the worse life, with reference to the nature of the soul.” But earlier in that dialogue he admits that many who devote themselves too single-mindedly to philosophy become cranks if not, indeed, pamponērous, “thoroughly depraved.” The character of Thrasymachus, taunting Socrates with the declaration that “justice is nothing but the advantage of the stronger,” provides an enduring image of the kind—or one of the kinds—of moral nihilist whose influence Plato feared.

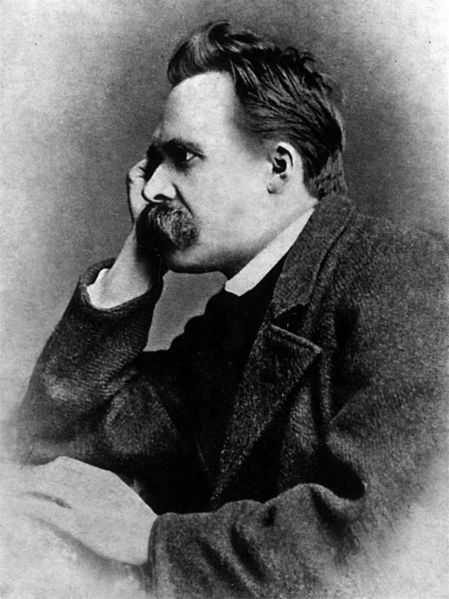

In the modern age, no one has carried forward Thrasymachus’s challenge more subtly or more radically than Friedrich Nietzsche. In a celebrated epigram, Nietzsche wrote that “we have art lest we perish from the truth.” His disturbing thought was that art, with its fondness for illusion and make-believe, did not so much grace life as provide grateful distraction from life’s horrors. But Nietzsche’s real radicalism came in the way that he attempted to read life against truth. Inverting the Platonic-Christian doctrine that linked truth with the good and the beautiful, he declared truth to be “ugly.” Suspecting that “the will to truth might be a concealed will to death,” Nietzsche boldly demanded that “the value of truth must for once be experimentally called into question.” This is, as it were, the moral source of all those famous Nietzschean formulae about truth and knowledge—that “there are no facts, only interpretations,” that “to tell the truth is simply to lie according to a fixed convention,” etc. Indeed, as Nietzsche recognized, his effort to provide a genealogy of truth led directly “back to the moral problem: Why have morality at all when life, nature, and history are ‘not moral’?”

Nietzsche’s influence on contemporary intellectual life cannot be overstated. “I am dynamite,” Nietzsche declared shortly before sinking into irretrievable madness. He was right. In one way or another, his example is an indispensable background to almost every destructive intellectual movement this century has witnessed. As the philosopher Richard Rorty enthusiastically put it, “it was Nietzsche who first explicitly suggested that we drop the whole idea of ‘knowing the truth.’ ”

It can be argued that part of Nietzsche’s influence has been due to misunderstanding. It was not entirely Nietzsche’s fault, for example, that he became in effect the house philosopher for the Nazis. But it is not possible to exonerate Nietzsche entirely on that score, either. He would have been repelled by National Socialism, had he lived to see it, especially its anti-Semitism and lumpen elements. But his consistent glorification of violence, his doctrine of “the will to power,” his distinction between “master” and “slave” morality, his image of the Übermensch: they all played so neatly into the Nazis’ hands precisely because their brutality nicely answered to the brutality of the Nazis’ requirements. Even if Nietzsche meant something different from what the Nazis meant, there was an element of like appealing to like when it came to their use of his rhetoric.

Nietzsche’s intellectual and moral influence on our contemporaries betrays similar complexities. One imagines that Nietzsche would have loathed such poseurs as Jacques Derrida and Michel Foucault (to name only two). Such bad taste! Such bad writing! But their philosophies are inconceivable without Nietzsche’s example. And, once again, there is a great deal in Nietzsche’s thought that answers to the requirements of deconstruction, poststructuralism, and their many offshoots and permutations. Nietzsche’s obsession with power once again is central, in particular his subjugation of truth to scenarios of power. (Foucault’s insistence that truth is always a coefficient of “regimes of power” is simply Nietzsche done over in black leather.) And where would the deconstructionists be without Nietzsche’s endlessly quoted declaration that truth is “a moveable host of metaphors, metonymies, and anthropomorphisms”? A deconstructionist without the word “metonymy” is a pitiful thing, like a dog missing its favorite bone.

Conceptually, such Nietzsche-isms as “everything praised as moral is identical in essence with everything immoral” add little to the message that Thrasymachus had for us twenty-five hundred years ago. They are the predictable product of nominalism and the desire to say something shocking, a perennial combination among the impatient. Nietzsche’s real radicalism arises from the grandiosity of his hubris. His militant “God is dead” atheism had its corollary: the dream of absolute self-creation, of a new sort of human being strong enough to dispense with inherited morality and create, in Nietzsche’s phrase, its “own new tables of what is good.” This ambition is at the root of Nietzsche’s ultimate goal of effecting a “transvaluation of all values.” It is also what makes his philosophy such an efficient solvent of traditional moral thinking.

At least, that is the substance of what makes Nietzsche philosophical “dynamite.” The detonator is supplied by Nietzsche’s remarkable style. As a stylist Nietzsche had many faults. His rhetoric can be as grandiose as his megalomania. But almost everyone agrees that Nietzsche was an extraordinarily seductive, if sometimes hectoring, writer. And it must be said, too, that Nietzsche’s rhetorical excesses were generally at the service of an abiding seriousness about deep issues. He may have been wrong about a great many things—about the most important things—but he was never frivolous. Unfortunately, many of Nietzsche’s imitators and disciples have copied the extravagance of his manner while neglecting his ultimately serious purpose. Indeed, his example has done a great deal to enfranchise frivolity as an acceptable academic tic. When combined with the polysyllabic portentousness of Nietzsche’s disciple Martin Heidegger—another major influence on the style of deconstruction—the effect is deadly: portentous frivolity, the worst of both worlds. Nietzsche once wrote that “one does not only wish to be understood when one writes, one wishes just as surely not to be understood.” How he would have rued that sentence had he foreseen the rebarbative quality of the academic prose his writings helped to inspire!

One could easily fill a book—many books—with examples of the most extreme sort: they are the stuff that Ph.D. theses in the humanities are made of today. But let us confine ourselves to a couple of characteristic examples from one of Nietzsche’s most influential heirs, the French deconstructionist Jacques Derrida. Inspired by Nietzsche, Derrida is always talking about “games” and “play.” So he begins his book Dissemination with this sentence: “This (therefore) will not have been a book.” “Therefore,” indeed. This is followed by a … no, not an explanation, but a sort of exfoliation, a luxuriance of verbal curlicues designed not to clear things up but to festoon the initial obscurity with cleverness. Such “games” pall quickly, of course, and so Derrida, like so many of his epigoni, tries to spice things up by injecting sex-talk wherever possible. He can’t mention Rousseau without dilating on masturbation, reflect on writing without bringing in incest, and so on. It’s what D. H. Lawrence called sex in the head: an academic’s frenetically anemic effort to demonstrate that there is blood in his (or her) veins.

It can all be pretty distasteful. But the real obscenity is practiced upon language. Here is a relatively mild passage from the beginning of “Plato’s Pharmacy,” Derrida’s famous essay on the Phaedrus:

A text is not a text unless it hides from the first comer, from the first glance, the law of its composition and the rules of its game. A text remains, moreover, forever imperceptible. Its law and its rules are not, however, harbored in the inaccessibility of a secret; it is simply that they can never be booked, in the present, into anything that could rigorously be called a perception. … If reading and writing are one, as is easily thought these days, if reading is writing, this oneness designates neither undifferentiated (con)fusion nor identity at perfect rest; the is that couples reading with writing must rip apart.

One must then, in a single gesture, but doubled, read and write. And that person would have understood nothing of the game who, at this, would feel himself authorized merely to add on; that is, to add any old thing. … The reading or writing supplement must be rigorously prescribed, but by the necessities of a game, by the logic of play, signs to which the system of all textual powers must be accorded and attuned.

Perhaps this is the appropriate place to recall Chesterton’s definition of madness as “using mental activity so as to reach mental helplessness.” If one penetrates behind the skeins of Derrida’s rhetoric, a moment’s thought will show that the “rigorously prescribed” logic of his play is completely short-circuited by a simple fact: that reading is not, as it happens, the same thing as writing. Never was, never will be.

But that is the way it is with deconstruction. Derrida’s most famous sentence is undoubtedly il n’y a pas de hors-texte, “there is nothing outside the text.” This is shorthand for denying that words can refer to a reality beyond words, for denying that truth has its measure in something beyond the web of our language games. It is, of course, a simple matter to say “There is nothing outside the text.” It has a clever, radical sound to it. But is it true? If so, then there is at least one thing “outside” the text, namely the truth of the statement “There is nothing outside the text.”

It is the same with all such pronouncements: “There is no such thing as literal meaning” (Stanley Fish), “All readings are misreadings” (Jonathan Culler), “Truth … cannot exist independently of the human mind” (Richard Rorty). Or, in slightly less staccato form, here is Fredric Jameson:

The very problem of a relationship between thoughts and words betrays a metaphysics of “presence,” and implies an illusion that universal substances exist, in which we come face to face once and for all with objects; that meanings exist, such that it ought to be possible to “decide” whether they are initially verbal or not; that there is such a thing as knowledge which one can acquire in some tangible and permanent way.

Not only are all these statements essentially self-refuting—does Stanley Fish literally mean “There is no such thing as literal meaning”?—but they also have the handicap of being continually refuted by experience. When Derrida leaves Plato’s pharmacy and goes into a Parisian one, he depends mightily on the fact that there is an outside to language, that when he asks for aspirine he will not be given arsenic instead.

A great deal of Derrida’s philosophy depends on the linguistic accident that the French verb différer means both “to differ” and “to defer.” Out of this pedestrian fact he has spun a dizzying argument, the upshot of which is that the meaning of any text is perpetually put off, deferred. But, of course, if some unscrupulous pharmacist were to substitute arsenic for aspirin, Derrida would learn to reconnect the signifier with the signified right speedily, and he would lament, in the time that remained to him, his apostasy from the logocentric “nostalgia” for the “metaphysics of presence.”

It is often pointed out today that deconstruction and structuralism are old hat, that the parade of academic fashion has passed on to other entertainments: cultural studies and various neo-Marxist hybrids of “theory” and the politics of grievance. This is partly true. It is certainly the case that the terms “deconstruction” and “structuralism” no longer have the cachet they possessed a decade ago. Nor does the name “Derrida” automatically produce the reverence and wonder among graduate students that it once did. (“Foucault,” however, seems to have retained its talismanic charm.) There are basically two reasons for this. The first has to do with the late Paul de Man, the Belgian-born Yale professor of comparative literature, who in addition to being one of the most prominent practitioners of deconstruction, was—it was revealed in the late 1980s—an enthusiastic contributor to Nazi newspapers during World War II. That discovery, and above all the flood of obscurantist mendacity disgorged by the deconstructionist-structuralist brotherhood—not least by Derrida himself—to exonerate de Man, has cast a permanent shadow over deconstruction’s status as a widely accepted instrument of intellectual liberation. The second reason is simply that, like any academic fashion, deconstruction’s methods and vocabulary, once so novel and forbidding, have gradually become part of the common coin of academic discourse.

In fact, though, this very process of assimilation has assured the continuing influence of deconstruction and structuralism. The terms “deconstruction” and “structuralism” are not invoked as regularly today as they once were; but their fundamental ideas about language, truth, and morality are more widespread than ever. Once at home mostly in philosophy and literature departments, their nihilistic tenets are cropping up further and further afield: in departments of history, sociology, political science, and architecture; in law schools and—God help us—business schools; and, outside the academy, in museums and other cultural institutions. Indeed, deconstructive themes and presuppositions have increasingly become part of the general intellectual atmosphere: absorbed to such an extent that, like the ideas of psychoanalysis, they float almost unnoticed, part of the ambient spiritual pollution of our time.

Though the language of deconstruction and structuralism is barbarous, the appeal of the doctrines is not hard to understand. It is basically a Nietzschean appeal. As the English philosopher and novelist Iris Murdoch observed, “the new anti-metaphysical metaphysic promises to unburden the intellectuals and set them free to play. Man has now ‘come of age’ and is strong enough to get rid of his past.”

That, at least, is the idea—the promise issued but, like meaning in a deconstructionist’s fantasy, always deferred. As Murdoch notes, part of what is objectionable in the deconstructionist-structuralist ethos “is the damage done to other modes of thinking and to literature.” Dissolving everything in a sea of unanchored signifiers, deconstruction encourages us to blur fundamental distinctions: distinctions between intellectual disciplines, between fact and fiction, between right and wrong. Because there is “no such thing” as intrinsic value, there is at bottom no reason to respect the integrity of literature, philosophy, science, or any other intellectual pursuit. All become fodder for the deconstructionist’s “play”—or perhaps “folly” would be a more appropriate term. The crucial thing that is lost is truth: the ideal, in Murdoch’s words, of “language as truthful, where ‘truthful’ means faithful to, engaging intelligently and responsibly with, a reality which is beyond us.”

Deconstruction is an evasion of reality. In this sense it may be described as a reactionary force. But because deconstruction operates by subversion, its evasions are at the same time an attack: an attack on the cogency of language and the moral and intellectual claims that language has codified in tradition. The subversive element inherent in the deconstructive enterprise is another reason that it has exercised such a mesmerizing spell on intellectuals eager to demonstrate their radical bona fides. Because it attacks the intellectual foundations of the established order, deconstruction promises its adherents not only an emancipation from the responsibilities of truth but also the prospect of engaging in a species of radical activism. A blow against the legitimacy of language, they imagine, is at the same time a blow against the legitimacy of the tradition in which language lives and has meaning. They are not mistaken about this. For it is by undercutting the idea of truth that the deconstructionist also undercuts the idea of value, including established social, moral, and political values. And it is here, as Murdoch points out, that “the deep affinity, the holding hands under the table, between structuralism and Marxism becomes intelligible.” Most deconstructionists would seem to be unlikely revolutionaries; but their attack on the inherited values of our culture is as radical and potentially destabilizing as anything devised by Mr. Molotov.

The deconstructionist impulse comes in a variety of flavors, from bitter to cloying, and can be made to serve a wide range of philosophical outlooks. That is part of what makes it so dangerous. One of the most beguiling and influential American deconstructionists is Richard Rorty. Once upon a time, Rorty was a serious analytic philosopher. Since the late 1970s, however, he has increasingly busied himself explaining why philosophy must jettison its concern with outmoded things like truth and human nature. Instead, philosophy should turn itself into a form of literature or—as he sometimes puts it—“fantasizing.” He is set on “blurring the literature-philosophy distinction and promoting the idea of a seamless, undifferentiated ‘general text,’ ” in which, say, Aristotle’s Metaphysics, a television program, and a French novel might coalesce into a fit object of hermeneutical scrutiny. Thus it is that Rorty believes that “the novel, the movie, and the TV program have, gradually but steadily, replaced the sermon and the treatise as the principal vehicles of moral change and progress.” (One does not, incidentally, have to believe that sermons or treatises were ever “the principal vehicles of moral change and progress” to be convinced that novels, movies, and TV programs are nothing of the sort now.)

As almost goes without saying, Rorty’s attack on philosophy and his celebration of culture as an “undifferentiated ‘general text’” have earned him many honors. In the early 1980s, he left his professorship at Princeton for an even grander one at the University of Virginia; he is the recipient of a MacArthur Foundation “genius” award; and he has lately emerged as one of those “all-purpose intellectuals … ready to offer a view on pretty much anything” that he extols in his book Consequences of Pragmatism (1982).

Rorty has written enthusiastically about Derrida as someone who “simply drops theory” for the sake of amoral “fantasizing” about his philosophical predecessors, “playing with them, giving free rein to the trains of association they produce.” He himself strives to follow this procedure. He does not, however, call himself a deconstructionist. That might be too off-putting. Instead, he calls himself a “pragmatist” or, more recently, a “liberal ironist.” What he wants, as he explained in Philosophy and the Mirror of Nature (1979), his first foray into postphilosophical waters, is “philosophy without epistemology,” that is, philosophy without truth, and especially without Truth with a capital T.

In brief, Rorty wants a philosophy (if we can still call it that) which “aims at continuing the conversation rather than at discovering truth.” He can manage to abide “truths” with a small t and in the plural: truths that we don’t take too seriously and wouldn’t dream of foisting upon others. Truths, in other words, that are true merely by linguistic convention. In a word, truths that are not true. What he cannot bear—and cannot bear to have us bear—is the idea of Truth that is somehow more than that.

Rorty generally tries to maintain a chummy, easygoing persona. This is consistent with his role as a “liberal ironist,” i.e., someone who thinks that “cruelty is the worst thing we can do” (the liberal part) but who, believing that moral values are utterly contingent, thinks that what counts as “cruelty” is a sociological or linguistic construct. (This is where the irony comes in: “I do not think,” he writes, “there are any plain moral facts out there … nor any neutral ground on which to stand and argue that either torture or kindness are [sic] preferable to the other.”) Accordingly, one thing that is certain to earn Rorty’s contempt is the spectacle of someone without sufficient contempt for the truth. “You can still find philosophy professors,” he tells us witheringly, “who will solemnly tell you that they are seeking the truth, not just a story or a consensus but an honest-to-God, down-home, accurate representation of the way the world is.” That’s the problem with liberal ironists: they are ironical about everything except their own irony, and are serious about tolerating everything except seriousness.

As Rorty is quick to point out, the “bedrock metaphysical issue” here is whether we have any non-linguistic access to reality. Does language “go all the way down” (il n’y a pas de hors-texte)? Or does language point to a reality beyond itself, a reality that exercises a legitimate claim on our attention and provides a measure and limit for our descriptions of the world? In other words, is truth something that we invent? Or something that we discover? The main current of Western culture has overwhelmingly endorsed the latter view. For example, the “receptivity” that Curtius insisted upon for the critic is unintelligible without some such presupposition. But Rorty wants us to drop “the notion of truth as correspondence with reality altogether” and realize that there is “no difference that makes a difference” between the statement “it works because it’s true” and “it’s true because it works.” He tells us that “Sentences like … ‘Truth is independent of the human mind’ are simply platitudes used to inculcate … the common sense of the West.” Of course, Rorty is right that such sentences “inculcate … the common sense of the West.” He is even right that they are “platitudes.” The statement “The sun rises in the East” is another such platitude. But even platitudes can be true.

Rorty cavalierly tells us that he does “not have much use for notions like ‘objective value’ and ‘objective truth.’” But then the list of things that Rorty does not have much use for is very long. For example, he wants us to get rid of the idea that “the self or the world has an intrinsic nature” because it is “a remnant of the idea that the world is a divine creation.” Since “socialization” (like language) “goes all the way down,” there is for Rorty no such thing as a self apart from the social roles it inhabits: “the word ‘I,’” he writes, “is as hollow as the word ‘death.’” (“Death,” he assures us, is an “empty” term.)

Rorty looks forward to a culture—he calls it a “liberal utopia”—in which the “Nietzschean metaphors” of self-creation are finally “literalized,” i.e., made real. For philosophers, or people who used to be philosophers, this would mean a culture that “took for granted that philosophical problems are as temporary as poetic problems, that there are no problems which bind the generations together in a single natural kind called ‘humanity.’ ” (“Humanity” is another one of those notions that Rorty cannot think about without scare quotes.)

Rorty recognizes that most people (“most nonintellectuals”) are not yet liberal ironists. Many people still believe that there is such a thing as truth independent of their thoughts. Some even continue to entertain the idea that their identity is more than a distillate of biological and sociological accidents. Rorty knows this. Whether he also knows that his own position as a liberal ironist crucially depends on most people being non-ironists is another question. One suspects not. In any event, he is clearly impatient with what he refers to as “a particular historically conditioned and possibly transient” view of the world, i.e., the pre-ironical view for which things like truth and morality still matter. Rorty, in short, is a connoisseur of contempt. He could hardly be more explicit about this. He tells us in the friendliest possible way that he wants us to “get to the point where we no longer worship anything, where we treat nothing as a quasi divinity, where we treat everything—our language, our conscience, our community—as a product of time and chance.”

In his book Overcoming Law (1995), the jurist and legal philosopher Richard Posner criticizes Rorty for his “deficient sense of fact” and “his belief in the plasticity of human nature,” noting that both are “typical of modern philosophy.” They are typical, anyway, of certain influential strains of modern philosophy. And it is in the union of these two things—a deficient sense of fact and a belief in the plasticity of human nature—that Nietzsche’s legacy bears its most poisonous fruit. When Rorty, expatiating on the delights of his liberal utopia, says that “a postmetaphysical culture seems to me no more impossible than a postreligious one, and equally desirable,” he perhaps speaks truer than he purposed. For despite the tenacity of non-irony in many sections of society, there is much in our culture—the culture of Europe writ large—that shows the disastrous effects of Nietzsche’s dream of a postmetaphysical, ironized society of putative self-creators. And to say that a postmetaphysical society would be “equally desirable” is also to say that it would be equally undesirable.

Whether our culture really is postreligious is still very much an open question. That liberal ironists such as Richard Rorty make do without religion does not tell us very much about the matter. In an essay called “The Self-Poisoning of the Open Society” the Polish philosopher Leszek Kolakowski observed that the idea that there are no fundamental disputes about moral and spiritual values is “an intellectualist self-delusion, a half-conscious inclination by Western academics to treat the values they acquired from their liberal education as something natural, innate, corresponding to the normal disposition of human nature.” Since Rorty does not believe that anything is natural or innate, Kolakowski’s observation has to be slightly modified to fit him. But his general point remains, namely that “the net result of education freed of authority, tradition, and dogma is moral nihilism.” Kolakowski readily admits that the belief in a unique core of personality “is not a scientifically provable truth.” But he argues that, “without this belief, the notion of personal dignity and of human rights is an arbitrary concoction, suspended in the void, indefensible, easy to be dismissed,” and hence prey to totalitarian doctrines.

The Promethean dreams of writers such as Derrida and Rorty depend critically on their denying the reality of anything that transcends the prerogatives of their efforts at self-creation. Traditionally, the recognition of such realities has been linked with a recognition of the sacred. And in this sense, as Kolakowski argues in “The Revenge of the Sacred in Secular Culture,” “culture, when it loses its sacred sense, loses all sense.” He continues:

With the disappearance of the sacred, which imposed limits to the perfection that could be attained by the profane, arises one of the most dangerous illusions of our civilization—the illusion that there are no limits to the changes that human life can undergo, that society is “in principle” an endlessly flexible thing, and that to deny this flexibility and this perfectibility is to deny man’s total autonomy and thus to deny man himself.

It is a curious irony that self-creators from Nietzsche through Rorty are reluctant children of the Enlightenment. The Enlightenment sought to emancipate man by liberating reason; but it has turned out that reason liberated from tradition grows rancorous and irrational. True, we would not wish to do without the benefits of the Enlightenment. As the sociologist Edward Shils pointed out in Tradition (1981), the Enlightenment’s “tradition of emancipation from traditions is … among the precious achievements of our civilization. It has made citizens out of slaves and serfs. It has opened the imagination and the reason of human beings.” Nevertheless, to the extent that it turns against the tradition that gives rise to it, Enlightenment rationalism degenerates into a force destructive of culture and the manifold directives that culture has bequeathed us. As Shils goes on to observe, “the destruction or discrediting of these cognitive, moral, metaphysical, and technical charts is a step into chaos. Destructive criticism which is an extension of reasoned criticism, aggravated by hatred, annuls the benefits of reason and restrained emancipation.” In Philosophical Investigations, Wittgenstein remarked that “all philosophical problems have the form ‘I have lost my way.’” Today, when so much of intellectual life has degenerated into an experiment against reality, perhaps the primary task that faces us is recovering a sense that many of the liberations we crave serve chiefly to compound the depth of our loss.