It is almost trite to observe that a free society cannot exist without the rule of law. But on the matter of liberty, law is, at best, highly overrated. In fact, it can be downright pernicious.

As Kevin Williamson has argued, real liberty is evolutionary. Free societies are dynamic, efficient, and innovative. Law, by contrast, can become the paralyzing debris that de Tocqueville predicted might someday cover the surface of modern democratic society. It is the “network of small complicated rules, minute and uniform, through which the most original minds and the most energetic characters cannot penetrate, to rise above the crowd.”

If the modern welfare state softens, bends, and usurps the will of man, law is the mechanism by which—to borrow again from de Tocqueville—it “compresses, enervates, extinguishes, and stupefies a people, till each nation is reduced to nothing better than a flock of timid and industrious animals, of which the government is the shepherd.”

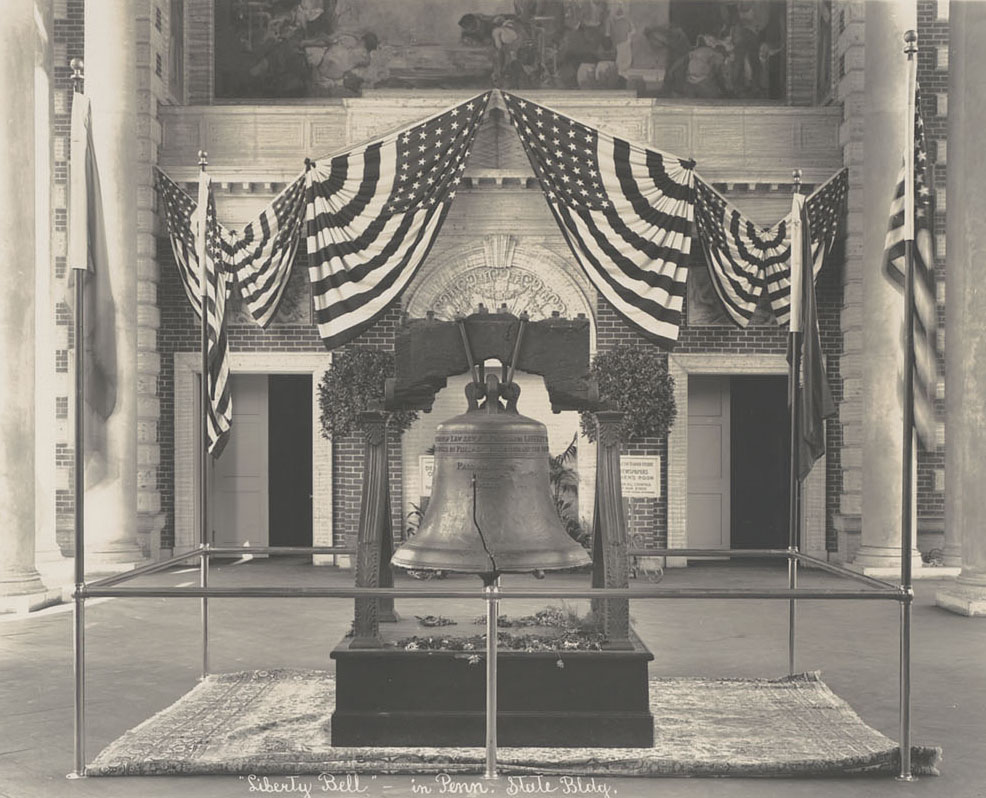

To be sure, law is a pillar of liberty—there can be no liberty without it. But law can never be the foundation of a free society—that role is reserved for culture. And if law is to enable freedom rather than impede it, it must be a very particular kind of law: the kind that regulates government—that reins in state authority. When law empowers government, when its tendency is to become our willful master rather than our defense against oppression, such law is the enemy of freedom.

Law